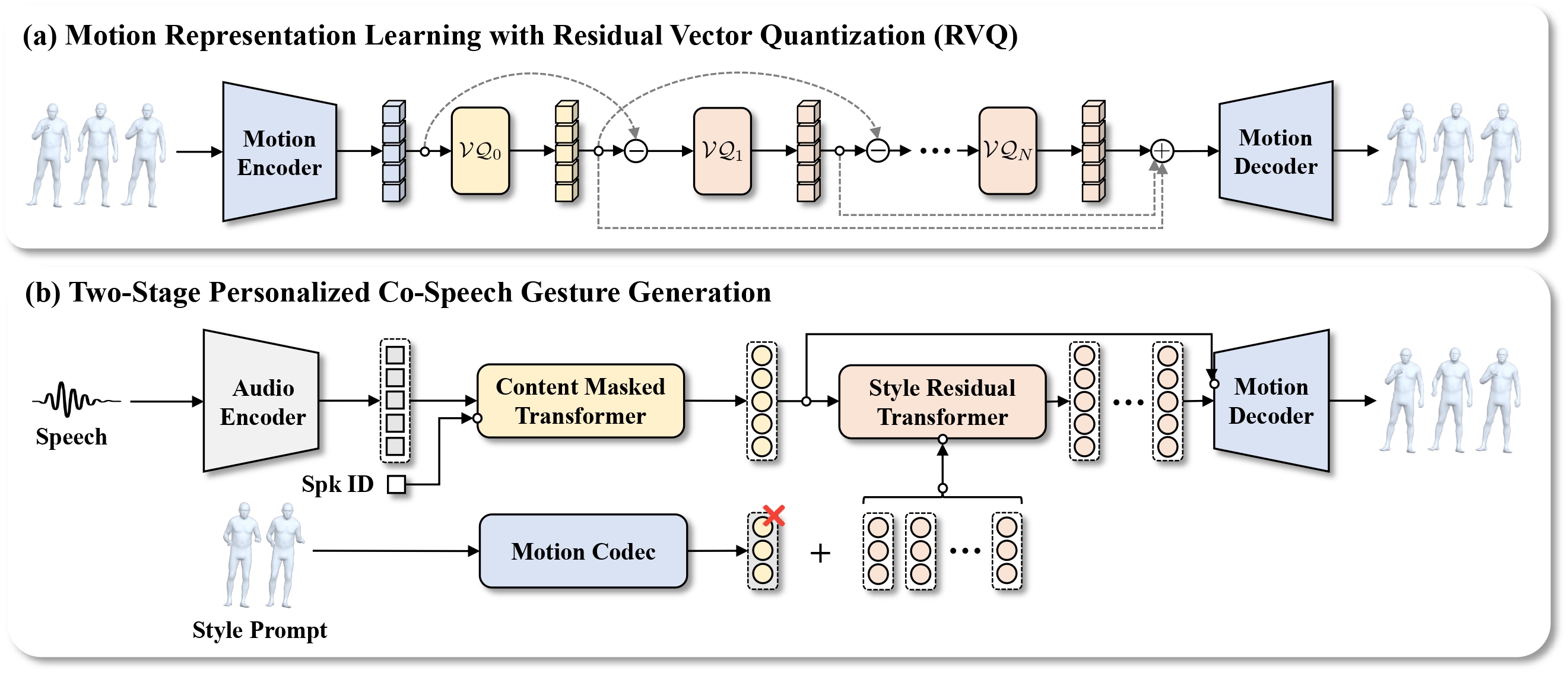

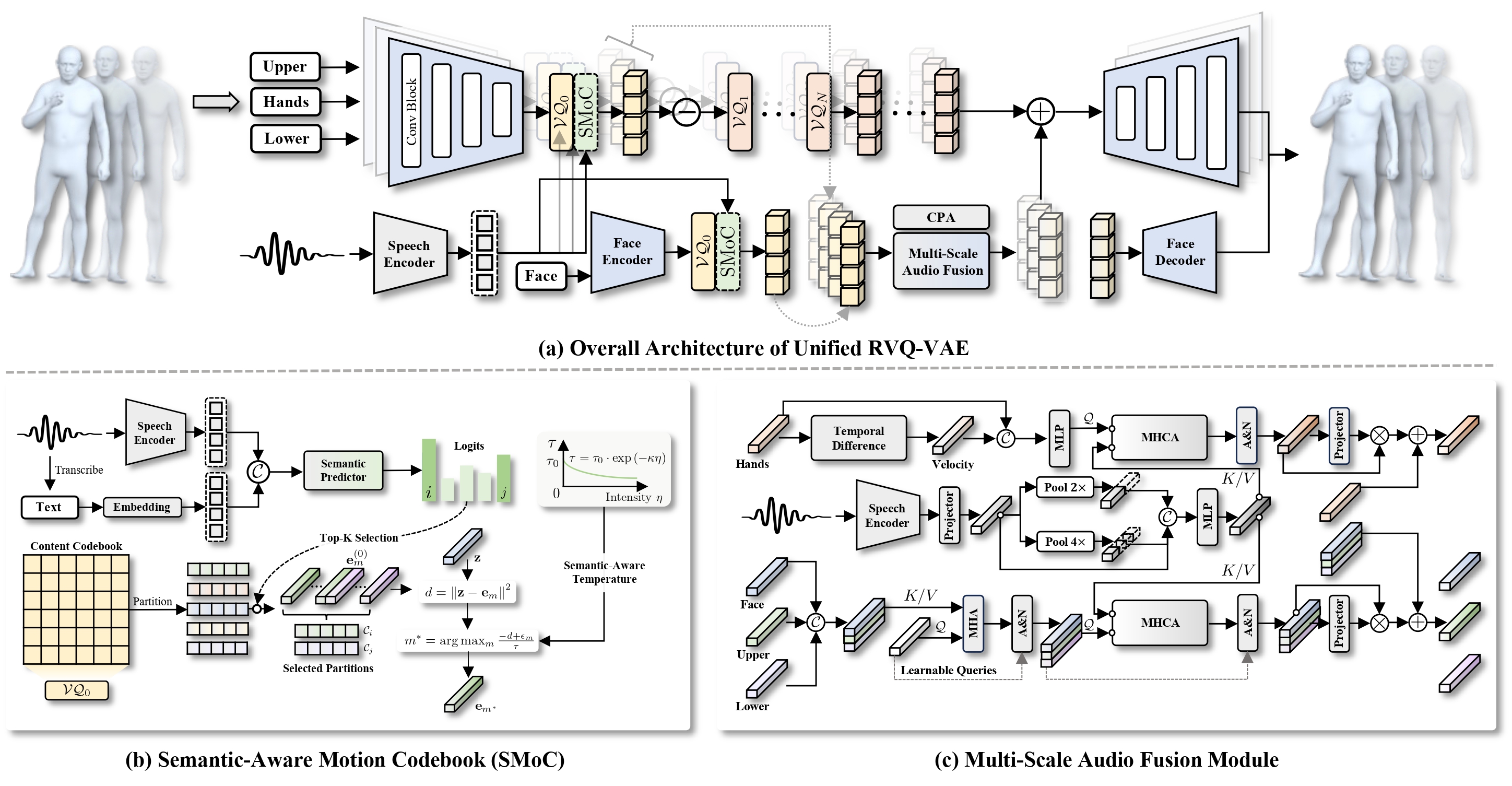

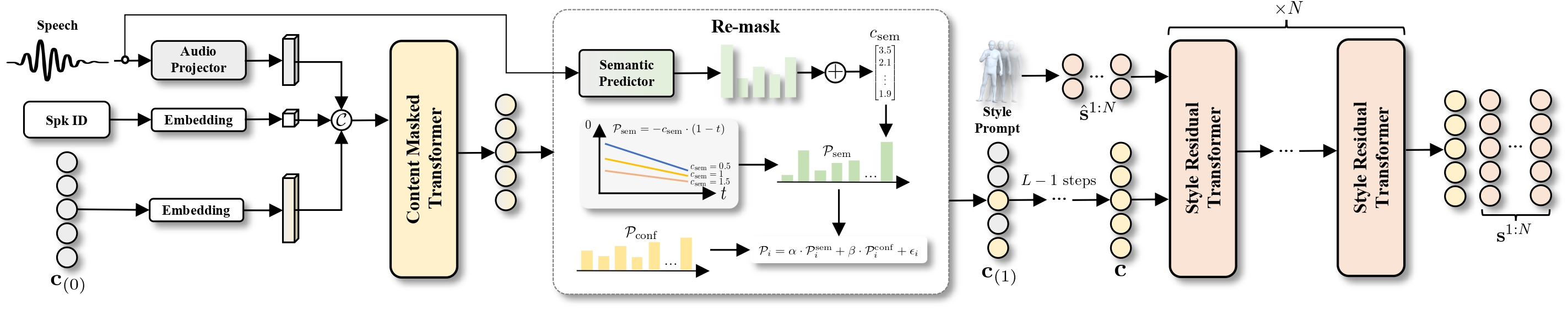

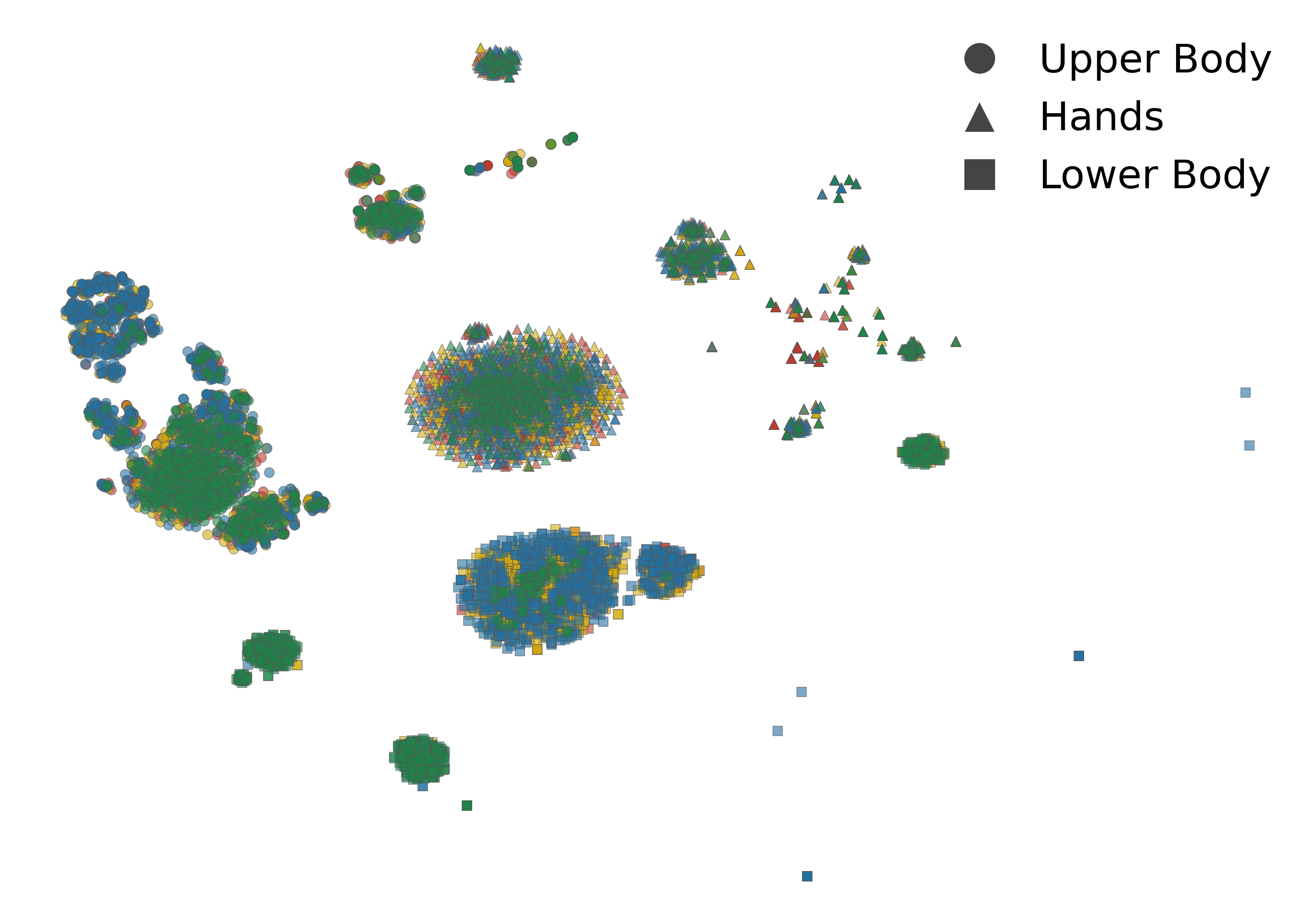

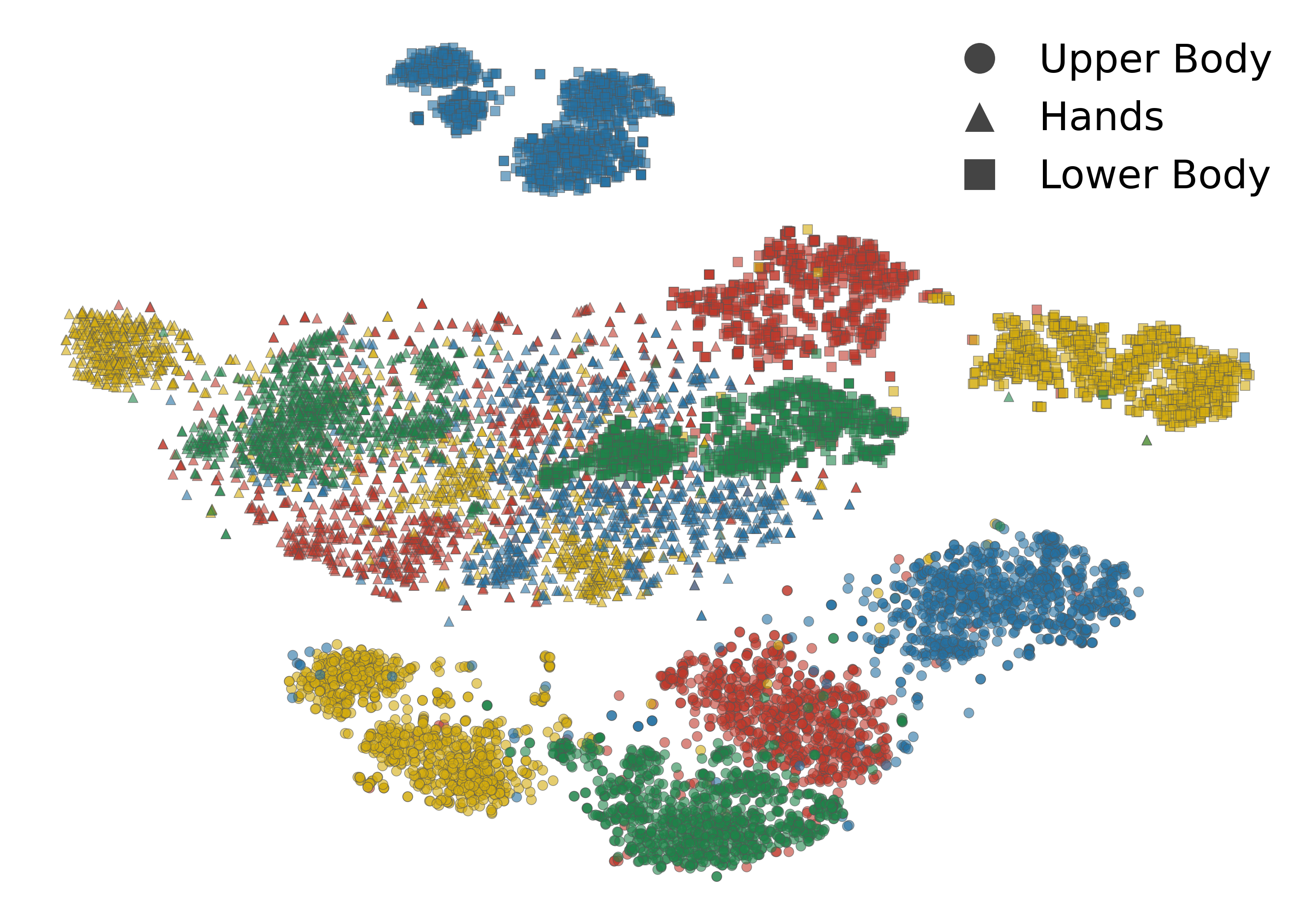

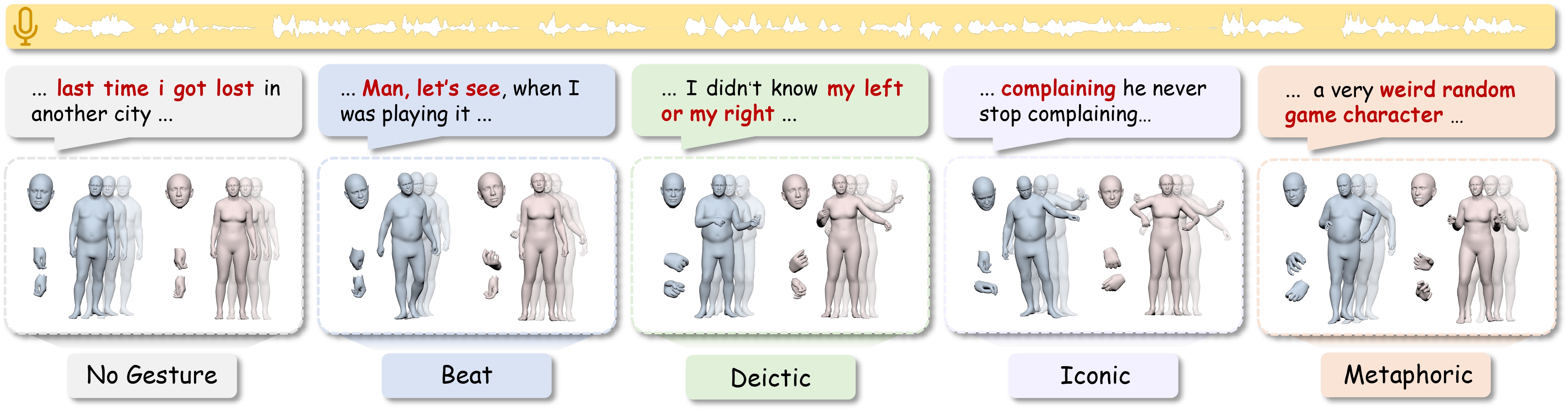

Prior methods encode gesture style as a single global attribute and so fail to separate *what* gesture to make from *how* to make it. PersonaGest explicitly disentangles content (what to gesture) from style (how to gesture) within a hierarchical motion representation, enabling both semantic coherence and personalization fidelity.